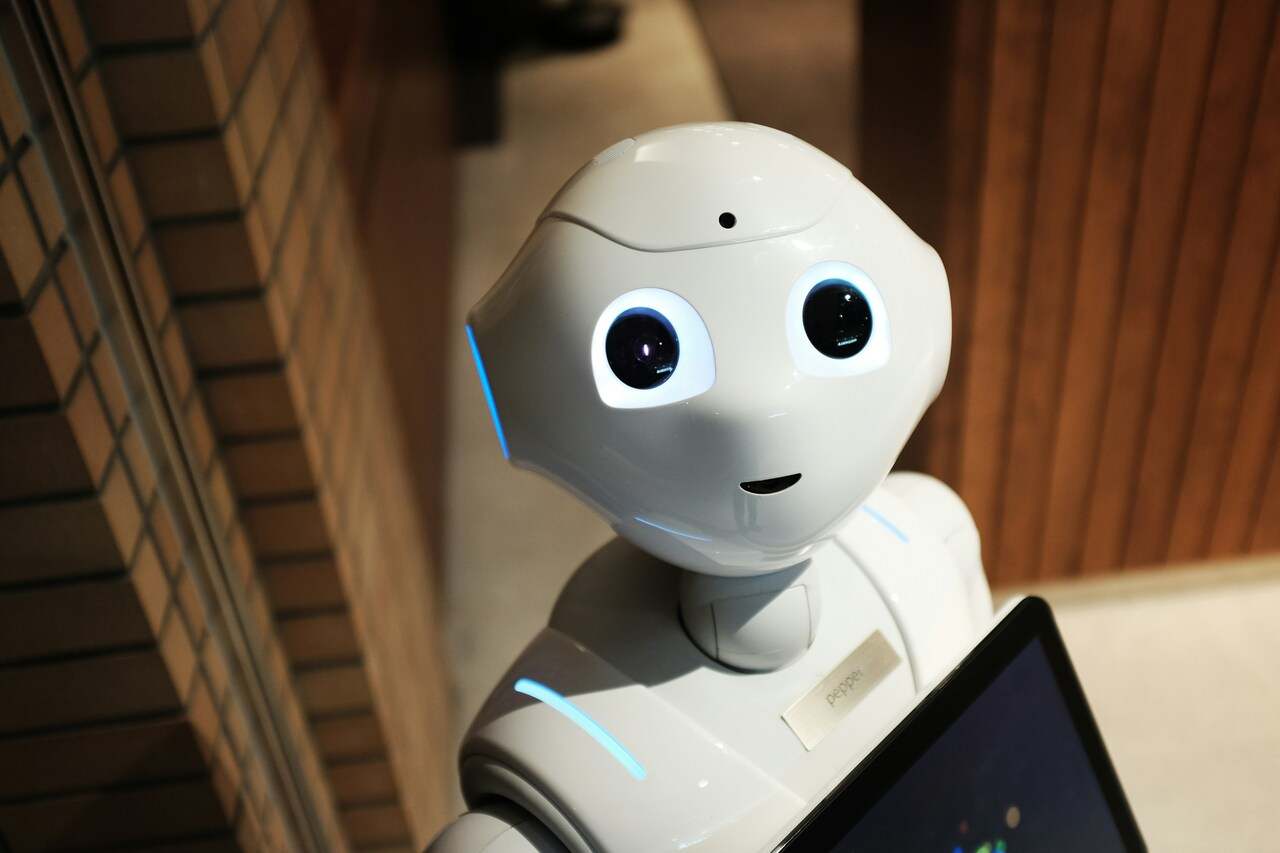

Many people still think of artificial intelligence as a chatbot that helps write an email, generate an image, or finish a school assignment. But AI no longer lives only inside a ChatGPT window. It quietly shapes the movie Netflix recommends after work, the next song Spotify plays, and the posts that appear near the top of your social feed.

That is where the problem begins. Almost everyone now interacts with artificial intelligence in some form, but not everyone can recognize it. According to a new study, this gap in recognition may be creating a new kind of digital divide, one that favors people with higher levels of education and income.

People Who Understand AI Have an Advantage. The Study Suggests That Advantage Is Not Evenly Shared

Researchers from Hong Kong Baptist University analyzed data from more than 10,000 American adults. They were not only interested in who uses AI tools directly. They wanted to know something more subtle: who can tell when artificial intelligence is working behind an ordinary feature in an app or online service.

The gap was clear. People with higher education and higher income were more likely to recognize AI in everyday digital services, feel more familiar with it, and use it more often. This is not just a divide between people who open ChatGPT and people who do not. The divide begins much earlier, with the ability to notice that an algorithm has entered the picture at all.

“Closing the AI awareness gap is essential,” says Professor Sai Wang, lead author of the study.

Wang warns that if AI awareness is concentrated mainly among people with higher incomes or more education, existing social inequalities may become even stronger.

A simple example is the job market. A job applicant who knows that employers may use AI to screen resumes can prepare their CV with that in mind. Someone who does not know this may lose an opportunity without ever understanding where things went wrong.

The real difference, then, is not only whether someone uses artificial intelligence. It is whether they can recognize AI when it operates quietly, as an invisible layer inside ordinary digital services.

AI Is Quietly Working inside Everyday Apps. Many Users Treat It like a Normal Feature

When Netflix recommends a show that genuinely catches your attention, it may not be a coincidence. When Spotify seems to pick the right next song for your mood, that is not just luck either. And when similar videos, ads, or posts keep appearing in your feed, somewhere in the background there is a system learning from your behavior.

This is one of the biggest concerns raised by the researchers. AI is no longer something you always open deliberately, like a visible chatbot. More often, it is built directly into services people use automatically. As a result, many people interact with artificial intelligence every day without thinking of it as AI at all.

Wang points to recommendation systems on platforms such as Netflix and Spotify. These systems suggest content based on a person’s tastes, yet many users may still see the results as “random or neutral” rather than AI-driven.

In the past, the digital divide was usually measured by access. Did someone have a computer? Internet? A smartphone? With AI, the problem is different. You can have the technology in your pocket and use it every day, but if you do not understand where it is acting on you, you are still at a disadvantage.

You May Use AI Every Day. What Matters Is Whether You Understand Where It Is Influencing You

One of the most interesting findings was not simply that more educated people use AI more often. The research also suggests that using AI does not automatically mean a person can recognize it. You can click recommended videos, rely on email filters, or accept app suggestions every day and still not realize that artificial intelligence is involved.

Familiarity with AI turned out to be a stronger factor than use alone. In plain terms, people who felt at least somewhat informed about AI were better at noticing it in everyday situations. The issue was not just exposure to technology. It was the ability to name what was happening behind the screen.

The data also showed that education was a stronger predictor than income. A person does not have to be among the wealthiest to have an advantage in the age of AI. If they have stronger digital skills, follow technology news, and understand how algorithms work, they can move through this environment with more confidence than someone who uses the same apps but treats their recommendations like a black box.

If You Do Not Know an Algorithm Is Working on You, It Is Easier to Believe What It Shows You

This divide is not just an issue for academics or schools. In everyday life, it can shape who moves through the digital world with an advantage and who simply accepts whatever apps serve up on the screen.

A person who understands AI is more likely to notice that a recommendation may not be neutral. When they see a suspicious video, they may ask whether it could be a deepfake. When an ad appears, they may understand it was not shown by accident. And when they build a professional profile or write a resume, they know it may be read not only by a person, but also by automated software looking for certain words and patterns.

Users who do not notice these mechanisms are in a weaker position. They see posts, ads, product recommendations, and videos, but treat them as the natural output of the app. They may not realize the system is learning from their clicks, watch time, purchases, and reactions. That makes it easier to fall into an information bubble, believe fake content, or trust what an algorithm keeps placing in front of them.

Giving People Technology Is Not Enough. They Need to Know What AI Is Doing with It

That is why simply talking about internet access or modern smartphones is no longer enough. Many people already have those things. The deeper problem is that they use services that sort information, recommend content, filter messages, and predict behavior, without realizing that AI is part of the process.

The researchers argue that targeted AI literacy is now necessary. Not in the form of complicated programming courses, but through practical examples from daily life. People should understand why a social platform shows them certain posts, why an online store recommends specific products, or why an app keeps serving content designed to hold their attention longer.

Wang and her colleagues suggest outreach campaigns, community workshops, and school curricula that explain AI not as a distant technology for developers, but as something already embedded in everyday apps. Otherwise, society may end up in a strange situation: almost everyone has the tools in their hands, but only those who already have an advantage understand the rules of the game.